Sometimes it is completely legitimate and expected to see duplicate API request and dbops entries within the same second (for example, when an operation is deliberately called two or more times), so it would be unacceptable for the log ingestion agent to treat identical log entries as duplicates. However, I would not want the log ingester to rescan an entire file and re-ingest all of its entries if the system restarted.
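Both requirements can be met by tracking a read position per file instead of comparing entry contents. Filebeat itself keeps per-file state, including a read offset, in a registry on disk rather than relying on timestamps. The sketch below is not Filebeat's implementation; it is a minimal illustration of the idea, and the function name `read_new_lines` and the JSON registry layout are hypothetical.

```python
import json
import os


def read_new_lines(log_path, registry_path):
    """Return complete lines appended to log_path since the previous call.

    The byte offset of the last fully read line is persisted in a small JSON
    registry (a hypothetical format), so a restart resumes where it stopped.
    """
    registry = {}
    if os.path.exists(registry_path):
        with open(registry_path, "r", encoding="utf-8") as f:
            registry = json.load(f)

    offset = registry.get(log_path, 0)
    # If the file is now shorter than the saved offset, it was truncated or
    # replaced, so start again from the beginning.
    if os.path.getsize(log_path) < offset:
        offset = 0

    lines = []
    with open(log_path, "rb") as f:
        f.seek(offset)
        for raw in f:
            if not raw.endswith(b"\n"):
                break  # partial line still being written; read it next time
            lines.append(raw.decode("utf-8").rstrip("\n"))
            offset += len(raw)

    # Persist the new offset atomically so a crash cannot corrupt the registry.
    registry[log_path] = offset
    tmp_path = registry_path + ".tmp"
    with open(tmp_path, "w", encoding="utf-8") as f:
        json.dump(registry, f)
    os.replace(tmp_path, registry_path)
    return lines
```

Because nothing is compared by content, two identical entries in the same second are both returned, while entries read before a restart are not returned again.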

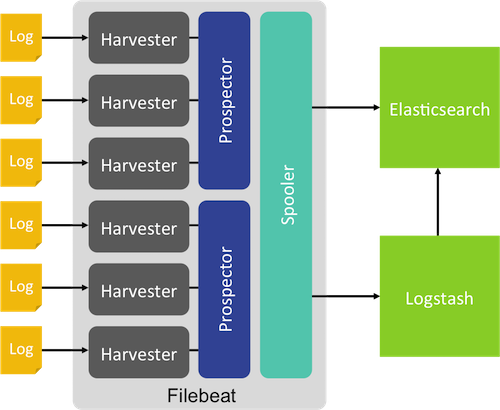

If for some reason I need to reconfigure or restart Filebeat, or restart the machine, how does Filebeat pick up where it left off? Does it remember the last ingested timestamp for a given pattern of files? (In this case, I might have files to ingest named api-*-*.log along with error-*-*.log and dbops-*-*.log.) While this is sufficient in normal operation, during peak hours it does not seem to scale to 10-12k EPS. output.logstash: hosts: 'localhost:31311' ..., Logstash, Kibana. I did not go into much detail on the parts related to Elastic in particular. Filebeat never exceeds 7k-8k EPS at any point. I need to retain these logs on the filesystem for no less than a week, so I have logfiles named api-20230222-22.log, api-20230222-23.log, api-20230223-00.log, etc. Hello, I am sharing my write-up covering the Suricata installation. We're running on-premises and already have log files we want to ship. This is good if the scale of logging is not so big as to require Logstash, or if Logstash is just not an option (e.g. using Elasticsearch as a managed service in AWS).

output.file: path: /tmp/filebeat filename. The logs have fairly standard "YYYY-MM-DD HH:mm:ss" timestamps. We'll ship logs directly into Elasticsearch, i.e. Suppose I have a system that rotates logs from an app once per hour, with typically 100K-200K entries per hourly logfile. Paste that somewhere safe, as it will be used to configure the Filebeat Azure module configuration file, azure.yml. I haven't actually implemented a Filebeat log ingest yet, but I am gathering info for our project design process, which includes getting API logs out to a new ELK stack for troubleshooting and reporting. Filebeat sends log files to Logstash or directly to Elasticsearch.
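For sizing, the hourly volume quoted above (100K-200K entries per hourly logfile) can be converted into an average event rate. This is a rough sketch that assumes entries are spread evenly across the hour, which real traffic will not be, so peaks will be higher than these averages. The helper name `average_eps` is hypothetical.

```python
SECONDS_PER_HOUR = 3600


def average_eps(entries_per_hour):
    """Average events per second, assuming an even spread over the hour."""
    return entries_per_hour / SECONDS_PER_HOUR


for entries in (100_000, 200_000):
    print(f"{entries} entries/hour is about {average_eps(entries):.1f} events/second")
# prints:
# 100000 entries/hour is about 27.8 events/second
# 200000 entries/hour is about 55.6 events/second
```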
